[New!] I will join NVIDIA Research as a research intern in Summer 2026.

I am a second-year Ph.D. student in Computer Science & Engineering at the University of California San Diego, working with Prof. Julian McAuley on tackling challenges in today’s multimodal large language models. Before that, I earned a BS in Software Engineering from South China University of Technology and an MS in Computer Science from Zhejiang University. I have been recognized as a 🎖 Notable Reviewer at ICLR.

I also spent time as a research scientist intern at Meta GenAI, mentored by Zecheng He.

For a complete list of publications, please visit my Google Scholar.

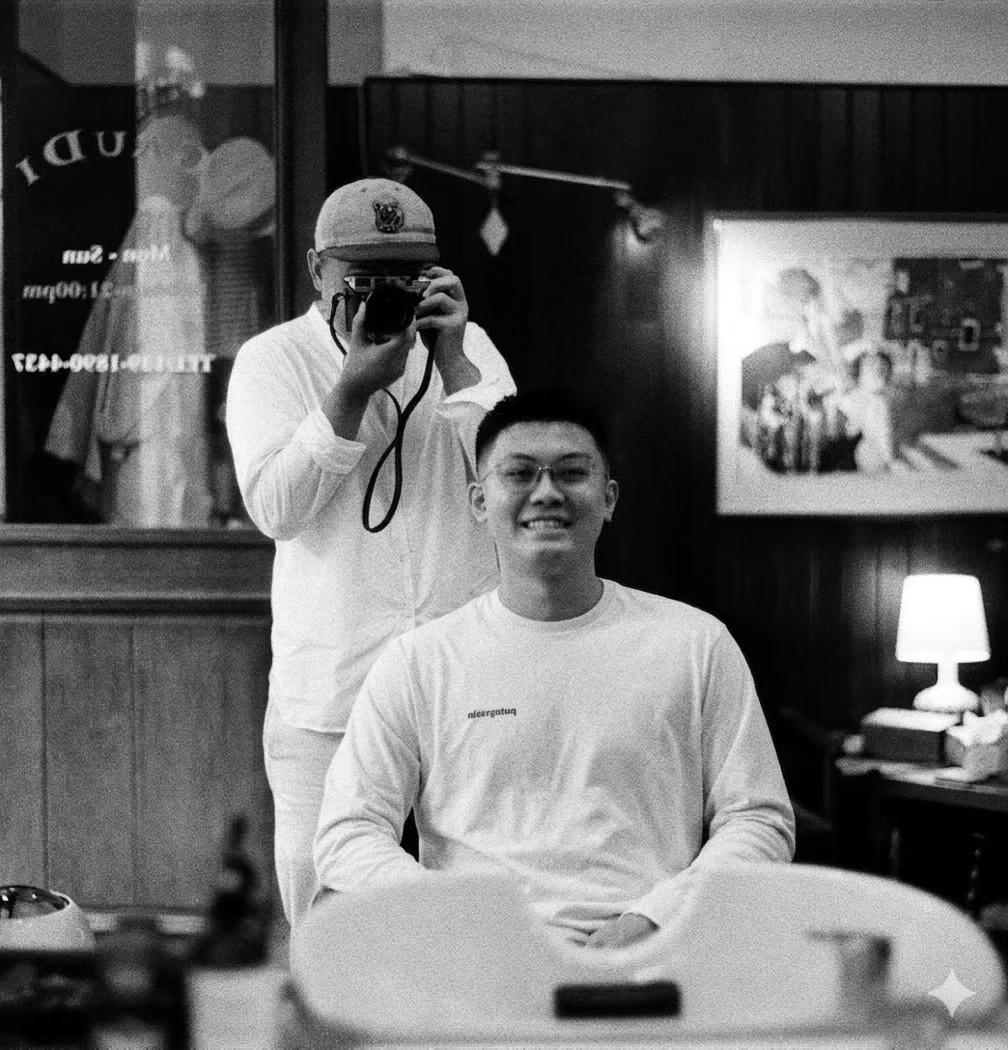

In my spare time, I am an avid reader, a dedicated foodie on a mission to find the best local eats, and a traveler at heart. Find me on Instagram for a glimpse into my adventures and quietly refined life!